Most organizations believe they’ve “handled” privacy because a toggle is switched off somewhere in settings.

But privacy is not a toggle. It’s an architecture.

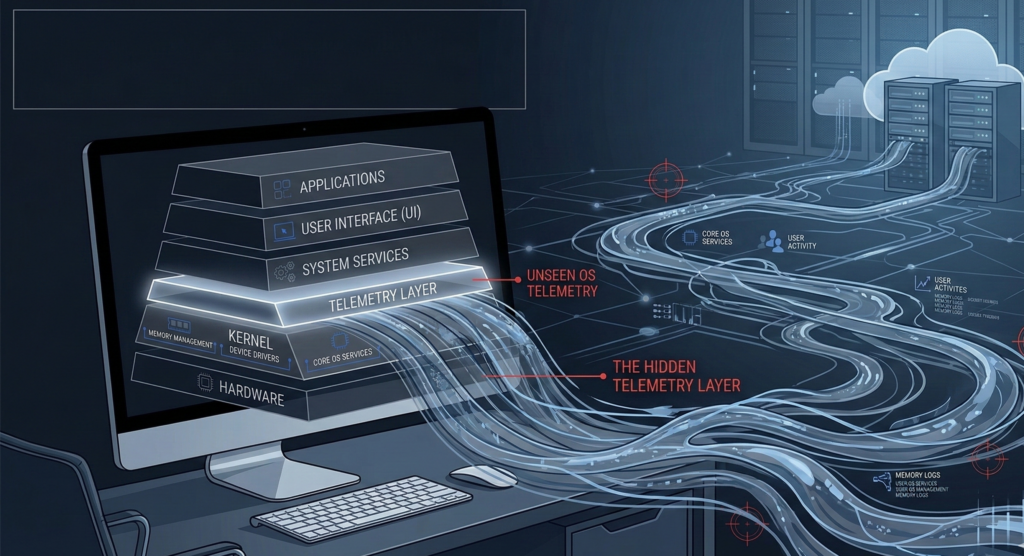

OS telemetry sits quietly beneath daily operations, collecting diagnostics, usage data, error traces, and behavioral signals. Vendors describe it as necessary for performance and security. Sometimes it is. Sometimes it’s a growth engine. The distinction matters.

The real question behind OS telemetry is not whether data is collected. It’s this: Can you verify what is collected, where it goes, how long it stays, and who can access it?

That is where telemetry collides with transparency.

This article breaks down how default OS configurations shape privacy outcomes, why closed-source assurances differ from verifiable control, and how Linux changes the control surface. You’ll also get a practical privacy hardening starter pack designed for operational teams, not hobbyists.

If you can’t audit it, you can’t meaningfully consent to it. Let’s unpack what that means in practice.

What OS Telemetry Actually Means in Practice

Telemetry is often framed as harmless diagnostic data. That framing is incomplete.

OS telemetry is structured data collection governed by policy, transport mechanisms, retention schedules, and third-party processing agreements.

It includes:

- System configuration details

- Application usage patterns

- Crash reports and memory snapshots

- Hardware identifiers

- Network metadata

- Feature engagement metrics

The mechanics matter more than the label.

Collection

Telemetry agents operate at the OS level with privileged visibility. They can see system events that user-space applications cannot. In default OS configurations, collection is often exposed as tiered “levels” that reduce what is sent rather than as a switch that turns it off entirely.

The problem is not collection itself. The problem is asymmetric visibility.

Vendors know precisely what leaves your endpoint. Most administrators do not.

Policy

Collection is governed by vendor policy documents that may evolve over time. Regulatory guidance increasingly scrutinizes retention and cross-border data flow, but default OS configurations frequently prioritize product improvement over minimal data principles.

Transmission and Retention

Data is typically encrypted in transit, but encryption is not the same as minimization: it protects the payload from interception, not from the party receiving it. Retention periods, aggregation rules, and third-party analytics partnerships introduce layers that are difficult to independently audit in closed ecosystems.

Actionable takeaway:

Inventory what telemetry agents run on your endpoints and map their outbound connections. If you cannot trace collection → transmission → retention, you do not have operational transparency.
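One way to start that mapping on a Linux endpoint is to group remote endpoints by the process that owns them. The sketch below is a minimal example, assuming input rows in the format printed by `ss -H -tunp` (run with enough privileges to see process names). The function name `map_outbound`, the sample process names, and the documentation-range IP addresses are hypothetical.

```python
import re
from collections import defaultdict

# Pulls the process name out of the users:(("name",pid=1,fd=2)) column.
PROC_RE = re.compile(r'users:\(\("([^"]+)"')


def map_outbound(ss_lines):
    """Group remote (host, port) pairs by owning process name."""
    flows = defaultdict(set)
    for line in ss_lines:
        fields = line.split()
        if len(fields) < 6:
            continue  # not a socket row
        host, _, port = fields[5].rpartition(":")
        if port == "*":
            continue  # unconnected socket, no remote peer
        match = PROC_RE.search(line)
        proc = match.group(1) if match else "unknown"
        flows[proc].add((host.strip("[]"), port))
    return {proc: sorted(peers) for proc, peers in flows.items()}


# Hypothetical sample rows; real input would come from `ss -H -tunp`.
SAMPLE = [
    'tcp ESTAB 0 0 10.0.0.5:51234 203.0.113.10:443 users:(("metrics-agent",pid=812,fd=9))',
    'tcp ESTAB 0 0 10.0.0.5:40022 198.51.100.7:443 users:(("updater",pid=901,fd=5))',
    'udp UNCONN 0 0 0.0.0.0:68 0.0.0.0:* users:(("dhclient",pid=400,fd=6))',
]
flows = map_outbound(SAMPLE)
```

The result is a per-process list of destinations that can be reviewed against what each agent is documented to contact. It covers only the transmission step; retention still has to be established from vendor terms.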

Open-Source Transparency vs Closed-Source Assurances

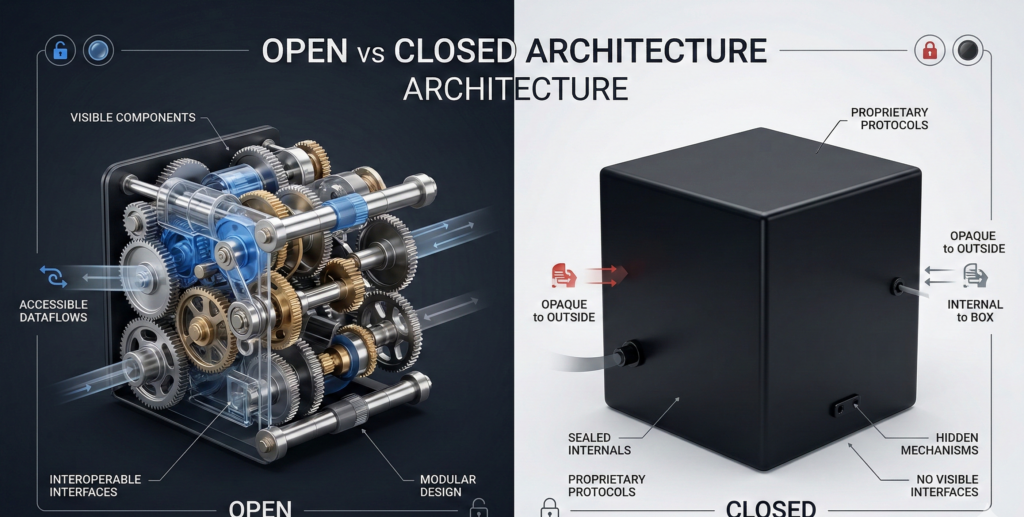

The telemetry debate often collapses into ideology. That’s unhelpful. The real distinction is verifiability.

Closed-Source Model

Closed operating systems provide privacy dashboards and policy statements. These are trust-based controls.

You are relying on:

- Vendor documentation

- Regulatory compliance claims

- Independent audits you do not control

There is nothing inherently unethical about this model. Many enterprises accept it. But it is assurance-based, not inspection-based.

Open-Source Model

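Linux distributions operate differently. The kernel and most distribution components are open source, so the code is publicly auditable; proprietary drivers and firmware, where installed, are the exception. Telemetry components, where present, are visible and modifiable. Some distributions ship with no telemetry by default.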

Transparency creates leverage.

You can:

- Inspect what services are running

- Remove packages at the source

- Recompile components

- Redirect or disable update channels

- Validate network traffic against expected behavior

This does not guarantee privacy. It guarantees inspectability.
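Validating traffic against expected behavior can be as simple as comparing observed destinations with a documented allowlist. This is a minimal sketch, assuming hostnames have already been extracted from DNS or firewall logs; `unexpected_destinations` and the example domains are hypothetical.

```python
def unexpected_destinations(observed, allowed_suffixes):
    """Return observed hostnames that match no allowed domain suffix."""
    def is_allowed(host):
        host = host.lower().rstrip(".")
        # Match the domain itself or any subdomain, never a partial label.
        return any(host == s or host.endswith("." + s) for s in allowed_suffixes)

    return sorted({h for h in observed if not is_allowed(h)})


# Hypothetical data: one approved mirror domain, four observed hosts.
ALLOWED = {"example.org"}
OBSERVED = [
    "mirror.example.org",
    "example.org",
    "notexample.org",
    "telemetry.vendor.example",
]
flagged = unexpected_destinations(OBSERVED, ALLOWED)
```

Matching on whole labels is a deliberate choice here: a plain suffix check would let `notexample.org` pass as if it were `example.org`.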

That distinction matters for compliance-driven industries such as healthcare, finance, and legal services, where data residency and processing boundaries must be demonstrable.

Actionable takeaway:

When evaluating OS privacy, ask: “Can we independently verify this behavior?” If the answer is no, you are operating on vendor trust, not control.

The Default OS Configuration Problem

Most privacy risk hides in defaults.

Default OS configurations are designed for usability, support diagnostics, and ecosystem integration. They are not optimized for strict data minimization.

Common patterns include:

- Auto-enrollment in feedback programs

- Background sync services

- Cloud account integration baked into login workflows

- Persistent device identifiers

- Feature usage analytics

Administrators can often reduce telemetry levels. Few reduce them comprehensively.

Misconception: Turning off visible “diagnostic data” eliminates data flow.

Reality: Multiple services may operate independently of that toggle.

The enterprise impact is subtle but material. Device metadata and usage patterns may enter vendor data lakes, where retention policies extend beyond what your compliance officer expects.

This is where OS telemetry intersects with governance.

Organizations that implement private AI, internal automation, or regulated workflows frequently assume their data perimeter ends at application boundaries. In practice, endpoint telemetry may extend that perimeter.

Actionable takeaway:

Treat default OS configuration as a baseline risk assessment exercise. Document what ships enabled, not just what you disable later.
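On systemd-based Linux systems, one way to document what ships enabled is to capture the enabled units on a fresh install and diff later states against it. The sketch below assumes listings in the format printed by `systemctl list-unit-files --state=enabled --no-legend`; the helper names and the `example-*` unit names are hypothetical.

```python
def enabled_units(listing):
    """Extract unit names whose state column reads 'enabled'."""
    units = set()
    for line in listing.splitlines():
        fields = line.split()
        if len(fields) >= 2 and fields[1] == "enabled":
            units.add(fields[0])
    return units


def baseline_drift(shipped, current):
    """Report units enabled since the baseline and units disabled since."""
    return {
        "added": sorted(current - shipped),
        "removed": sorted(shipped - current),
    }


# Hypothetical listings: a fresh install versus a hardened image.
SHIPPED = """\
cron.service enabled enabled
example-metrics.service enabled enabled
example-crash-report.service enabled enabled
"""
HARDENED = """\
cron.service enabled enabled
"""
drift = baseline_drift(enabled_units(SHIPPED), enabled_units(HARDENED))
```

Keeping the shipped listing alongside the hardened one gives auditors both halves of the record: what the vendor default was, and what was changed.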

Linux Privacy Controls: Practical Baselines That Hold

Linux is not inherently private. It is configurable.

The advantage is mechanical control.

Baseline Controls for Linux Desktops

- Choose a distribution with minimal default telemetry.

- Disable optional metrics services at installation.

- Restrict outbound connections via firewall rules.

- Install software from the distribution’s signed repositories to reduce the risk of bundled trackers.

- Audit running services with system-level inspection tools such as systemctl and ss.

- Encrypt disks and enforce local access controls.

Control is granular and inspectable.

In regulated environments, this matters. Data residency strategies often fail at the endpoint layer. Linux allows teams to align system behavior with documented privacy policy.

Organizations deploying internal AI systems face similar boundary questions. Platforms like Aivorys (https://aivorys.com) are built for this use case: private AI with controlled data handling, voice automation, and CRM-connected workflows. The OS layer should follow the same philosophy: visibility first, automation second.

For teams seeking managed endpoint consistency without sacrificing privacy posture, providers such as Carefree Computing focus on modernizing boundary models rather than stacking more tools on legacy assumptions.

Actionable takeaway:

Privacy on Linux is not automatic. It is enforceable. Build a documented baseline and apply it consistently across endpoints.

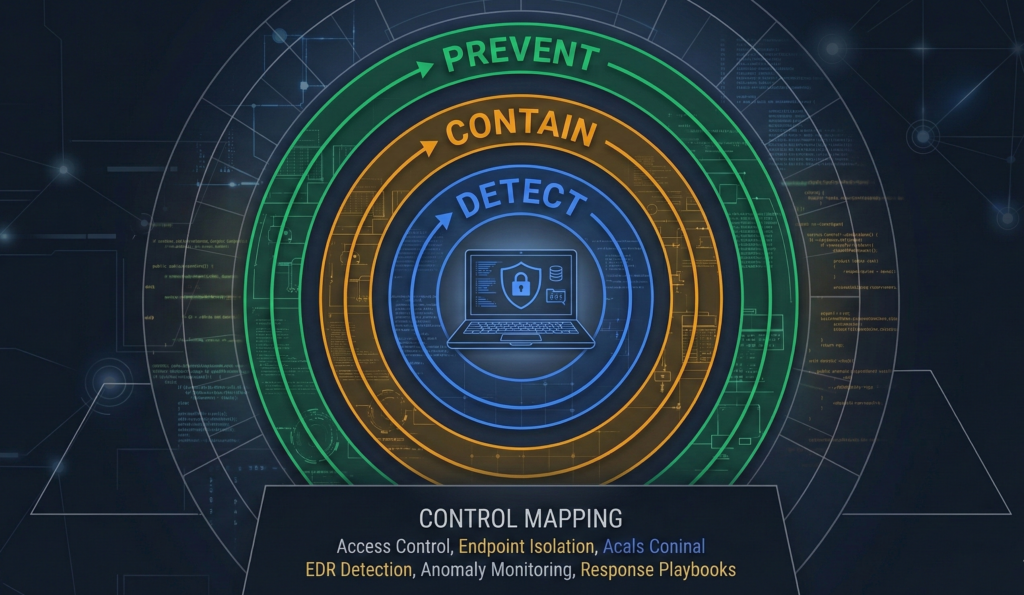

Telemetry vs Transparency: The Engineer’s Control Map

Most security strategies focus on detection. Privacy requires structural thinking.

Here is a practical control map you can apply immediately.

Prevent

- Disable non-essential telemetry agents

- Limit outbound OS-level network destinations

- Enforce minimal installation images

- Install software only from signed repositories

Contain

- Segment endpoints from sensitive internal systems

- Enforce least privilege at the OS level

- Use local logging for internal audit instead of vendor telemetry

Detect

- Monitor outbound traffic anomalies

- Audit changes to telemetry configuration

- Log package installation and service changes

This prevent → contain → detect sequence reframes OS telemetry from a settings problem into a systems design problem.
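For the detect stage, auditing changes to telemetry configuration can be approximated by fingerprinting the relevant files and comparing snapshots. This is a minimal sketch using only the standard library; the file name `metrics.conf` and its contents are hypothetical, and a real deployment would point at whatever configuration files its telemetry services actually read.

```python
import hashlib
import tempfile
from pathlib import Path


def fingerprint(paths):
    """Map each path to the SHA-256 of its contents, or None if absent."""
    result = {}
    for path in map(Path, paths):
        digest = hashlib.sha256(path.read_bytes()).hexdigest() if path.is_file() else None
        result[str(path)] = digest
    return result


def changed(before, after):
    """List paths whose fingerprint differs between two snapshots."""
    return sorted(p for p in before.keys() | after.keys() if before.get(p) != after.get(p))


# Demonstration against a throwaway file (hypothetical config).
with tempfile.TemporaryDirectory() as tmp:
    conf = Path(tmp) / "metrics.conf"
    conf.write_text("enabled=false\n")
    before = fingerprint([conf])
    conf.write_text("enabled=true\n")  # simulate an update re-enabling metrics
    after = fingerprint([conf])
    drifted = changed(before, after)
```

Storing the baseline snapshot outside the endpoint keeps the comparison meaningful even if the endpoint itself is modified.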

Checklist: Privacy Hardening Starter Pack

- Inventory all OS telemetry services

- Map outbound data flows

- Disable optional metrics

- Enforce firewall egress policies

- Standardize Linux distribution and image

- Document retention assumptions

- Review vendor data processing terms annually

If you cannot check at least five of these confidently, your telemetry posture is reactive.
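The five-of-seven rule above can be encoded so the result is recorded rather than estimated. This is a small sketch; the function name `assess` and the label "baseline met" are assumptions for illustration, while the item text and the threshold come from the checklist itself.

```python
CHECKLIST = (
    "Inventory all OS telemetry services",
    "Map outbound data flows",
    "Disable optional metrics",
    "Enforce firewall egress policies",
    "Standardize Linux distribution and image",
    "Document retention assumptions",
    "Review vendor data processing terms annually",
)


def assess(confirmed, threshold=5):
    """Return a posture label and the checklist items still open."""
    missing = [item for item in CHECKLIST if item not in confirmed]
    done = len(CHECKLIST) - len(missing)
    status = "reactive" if done < threshold else "baseline met"
    return status, missing


# Example: a team that can confirm only the first four items.
status, missing = assess(set(CHECKLIST[:4]))
```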

Actionable takeaway:

Adopt the control map as a policy artifact, not an informal practice.

The “Data-for-Services” Trap

Many operating systems subsidize features with telemetry. Cloud sync, AI assistants, personalization engines, and automatic troubleshooting are powered by usage data.

The tradeoff is convenience versus control.

Privacy-first tooling in Linux ecosystems often rejects this exchange. You gain stability and auditability but may sacrifice seamless integration with proprietary ecosystems.

This is not ideological. It is operational.

Enterprises must ask:

- Does this feature justify the data exposure?

- Is there a local or private alternative?

- Can we document the risk in our governance model?

Privacy is increasingly treated as risk management rather than preference. Endpoint telemetry influences breach impact, regulatory exposure, and vendor dependency.

Actionable takeaway:

Evaluate features through a risk lens, not a productivity lens alone.

FAQ

What is OS telemetry in simple terms?

OS telemetry is automated data collection performed by an operating system to gather diagnostics, usage patterns, and system information. It typically includes hardware identifiers, crash reports, and feature engagement data. The privacy impact depends on what is collected, how long it is retained, and whether organizations can verify those processes.

Is Linux completely free from telemetry?

No. Some Linux distributions include optional metrics collection. The difference is that telemetry components are visible and modifiable. Administrators can inspect, disable, or remove services directly. Transparency provides enforceable control, but configuration discipline is still required.

How do I know what data my operating system sends?

You can monitor outbound network connections, inspect running services, review package documentation, and analyze firewall logs. In closed-source systems, visibility may be limited to vendor documentation. In open-source systems, the code and service behavior are inspectable.

Does disabling telemetry weaken security?

Not inherently. Some diagnostic data improves vendor response to vulnerabilities. However, security does not require unrestricted data sharing. Organizations can retain internal logs and manage updates without exporting operational data beyond defined boundaries.

Why does OS telemetry matter for compliance?

Regulatory guidance emphasizes data minimization, retention control, and cross-border transfer transparency. Endpoint telemetry may transmit metadata that falls within regulatory scope. If organizations cannot demonstrate control or auditability, compliance exposure increases.

Conclusion

Telemetry is not the enemy. Opacity is.

Vendors have legitimate reasons to collect some data to maintain and improve a system. The strategic question is whether that collection is transparent, controllable, and aligned with your governance model.

OS telemetry becomes a risk when it operates beyond your line of sight.

Linux does not eliminate tradeoffs. It shifts leverage toward the operator. Transparency creates options. Options create control. Control enables compliance.

For organizations serious about privacy, the next step is not another dashboard toggle. It is a documented control model that spans endpoint configuration, network boundaries, and data governance assumptions.

Audit what runs. Map what leaves. Enforce what stays.

Privacy is not a feeling. It is a configuration.